Discovery & Insights

09 Jul 2023 » Platform

Having lots of data in a data lake sitting idle is a waste of resources. If you store data, it is because you want to take advantage of it. If you have no use for some data, you should, at the very least, archive it, if not completely delete it. Let’s see a few options of what you can do with your Data Lake.

What’s in a name

You may be wondering why I chose the title for this post. In a previous one on reporting vs analysis, I wrote about the importance of both generating reports for upper management and data analysis to find gold in your own data. Also, remember the definition I gave to the Data Lake:

Storage of data for analytical workloads.

In summary, the Data Lake was explicitly designed to be the place where you store data to discover hidden gems and find unknown insights about your business.

Query Service

Adobe Experience Platform (AEP) offers a service to access the data in the Data Lake using SQL. We call it Query Service. This does not mean that the Data Lake is based on an RDBMS; it is just a convenient way to access the data using a query language that is the standard in the market. You access the service using either the UI or any PostgreSQL-compatible application, as I explained in this post: Access AEP as a Database.

Query Service supports the following features:

- ANSI SQL. Let’s not overcomplicate things and use a recognized standard.

- Spark SQL functions. While the ANSI SQL standard is great, it is very generic. Additional functionality is often needed, which is why these functions were added.

- Adobe-defined functions. Finally, given that there are some AEP-specific features in the data that are not offered by any standard or library, Adobe has added functions specific to AEP.

Depending on your license, you will be entitled to a simple version with limitations or the full capability. Check the Query Service packages page to understand the differences.

A couple of clarifications before I continue:

- As with all data lake technologies, Query Service is not real-time. If you have real-time requirements, you will need to find another solution.

- There is no way for Query Services to interrogate the Real-Time Customer Profile; it can only access the Data Lake.

Ad hoc queries

All AEP customers have access to this capability. This is what I referred above to when I said “simple version”. With it, you can run SQL SELECT queries to interrogate the data. No other SQL command is available and the output must be either a simple result (like a COUNT) or sent outside of AEP.

Although this is the limited version of Query Service, it still can be used for interesting use cases:

- Generic data analysis. I know, this is a very generic statement. What I mean is that you can run any useful SQL SELECT command against your data, as long as it complies with the limitations of ad hoc queries.

- Data validation. I want to spend a bit of time here, as this is a very important use case. Before you enable for profile any dataset, you should do extensive validation tests on the ingested data. Our experience shows that you will need a few iterations until you get it right. Once you are confident that the data is correct and clean, you delete the dataset, create a new one, enable it for profile, and ingest the source data once again. This is Adobe data architect’s standard practice.

- Evaluate segment definitions. Creating a segment in the UI is simple, but testing it is not that simple. If you want to confirm that the segment gets you the right audience, you can first run an equivalent SQL query and check the results.

Batch queries

If you feel like the power of Query Service is what you need, but ad hoc query limitations prevent you from achieving your goals, the package Data Distiller removes these limitations. This new additional capability is called batch query. With it, you get two important features:

- Create datasets as the output of a SELECT. You will also see this feature named Create Table As Select or CTAS. With it, you can write a SELECT query, whose output is stored in a new dataset.

- Schedule queries.

I am sure you can immediately think of queries that you would like to run with these additional features. In case you need some ideas, here you have a few:

- Post-ingestion data preparation. There are many cases where the data you get from your data sources is not exactly what you want. While it is preferred that you do these transformations using ETL tools or data prep functions, it may not always be feasible. If you need to apply complex transformations to your source data, batch queries can come to the rescue, as long as you can write the transformation in a SQL query.

- Derived attributes. In other cases, you may need to generate new profile attributes, which require a combination of various data sources to calculate them. Again, a JOIN SQL query is what you need.

- Data filtering. If your data source sends too much data but you only need a fraction of it, a batch query can create a new dataset with only the data you need. This can be useful to avoid overloading the Real-Time Customer Profile.

Intelligence & AI

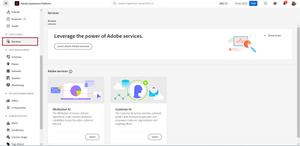

It is 2023 and everybody is talking about AI. Unless you have been living in a cave, you should know that Adobe has been offering multiple AI/ML features across its different tools. However, Adobe takes a different approach to AI. Instead of offering a generic engine that you can program, Adobe’s tools offer AI/ML capabilities ready to be used by the final users. In the case of AEP, these users are the marketers. So, do not worry if you do not fully understand how neural networks work, you do not need to.

AEP currently offers all customers the following models:

- Attribution AI. If you are familiar with Adobe Analytics, you will remember that, initially, it only offered first- and last-touch attribution models. Over time, other attributions models were included in the tool, like U-shape or J-shape. Well, Attribution AI is an attribution algorithm based on AI/ML. The goal is to do the best attribution of a conversion to all marketing channels that contributed to that conversion.

- Customer AI. This feature creates propensity scores, like churn.

The way these capabilities work is by getting the input of the datasets you configure and outputting the results to a new dataset, very similar to what I explained above with batch queries.