Clickstream Data Feed

One trend that I have seen in the last few years is the interest of my customers in getting the raw data out of the Adobe Marketing Cloud. More and more corporations are hiring data analysts and these people want all the data they can get. Using various tools (R, Hadoop, Data Workbench…), it is possible to dig deeper into the data to uncover hidden gems or create more sophisticated reports. Today I will explain the raw data from Adobe Analytics, the clickstream data feed.

Marketing Cloud ID Service

[UPDATE] Since I wrote this post, the service has been renamed to Experience Cloud ID (ECID) Service. The contents of this post are largely outdated, but I am keeping it for historical purposes. The Marketing Cloud ID (MCID) Service enables most Adobe Experience Cloud solutions to uniquely identify a visitor. It is the basis of people identification, as I explained a few weeks ago. But it does not stop there; it provides the foundations for the People core service (aka Profiles and Audiences), which, in turn, provides customer attributes and shared audiences.

Processing Rules

Processing rules are basic if-then-else statements that perform minor manipulations of the data. They were added mainly to map context data variables into Analytics variables. However, you can also use them in simple cases instead of a VISTA rule. With processing rules, you can concatenate, copy or set values in Analytics variables. They must not be confused with Marketing Channels processing rules, which are specific to Marketing Channels.
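To make the if-then idea concrete, here is a minimal sketch in Python. It is not how Adobe implements processing rules, which are configured in the admin interface rather than written as code; it only imitates the logic of one "copy" rule and one "concatenate" rule. The hit structure, the context data key `page.section` and the choice of `eVar1` and `prop1` are all assumptions for illustration.

```python
def apply_processing_rules(hit: dict) -> dict:
    """Imitate two simple processing rules on a single hit (illustrative only)."""
    result = dict(hit)  # work on a copy so the incoming hit is left untouched
    context = hit.get("contextData", {})

    # Rule 1 (copy): if the hypothetical context data variable "page.section"
    # is set, copy its value into eVar1.
    section = context.get("page.section")
    if section:
        result["eVar1"] = section

        # Rule 2 (concatenate): if the page name is also set,
        # write "section:pageName" into prop1.
        page_name = hit.get("pageName")
        if page_name:
            result["prop1"] = f"{section}:{page_name}"

    return result


example_hit = {"pageName": "home", "contextData": {"page.section": "news"}}
processed = apply_processing_rules(example_hit)
```

A hit without the context data variable passes through unchanged, which mirrors the way a rule does nothing when its condition is not met.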

ID sync

When I was following the Adobe Audience Manager training, I remember that one of the topics I found most difficult to understand was ID syncing. The enablers spent a lot of time using these words and I could see that it was a key part of any Data Management Platform (DMP). Once I finally understood what it meant, I felt relieved. Today I will explain this concept, in case you are also stuck.

People Identification

One of the main challenges, if not the most important, of digital marketing is to be able to identify real people. This is the key to marketing objectives like “360-degree view of the customers” or “single view of consumers”. The problem is that web analytics tools track only visitors, so we need to find a way to perform this people identification. Let me explain what options we have.

Cookies: Back to Basics

I must admit it: I love cookies. I can eat one cookie pack in a couple of days. Therefore, I try to keep my kitchen free of cookies. However, this is not what I am going to explain here. Today I am going to take a step back and, instead of advanced topics, I want to review a basic concept: cookies. I know most of you know fairly well what cookies are. However, if you are still trying to get your head around cookies, I recommend you keep on reading. You might also find useful ideas to explain cookies to other people.

Share Segments with the Marketing Cloud

If you have followed my previous two posts, you should understand by now the basics of Analytics segments and their containers. I could write more about the creation of segments, but today I want to explain one of the features that make the Adobe Marketing Cloud a multi-solution offering: shared segments. With this feature, you can create a segment in Adobe Analytics and use it in other solutions, like Audience Manager or Target.

Adobe Analytics Segment Containers

In my last post about the basics of Analytics segments, I briefly touched upon the segment containers: hit, visit and visitor. However, I remember how long it took me to understand them initially. And not only me; some of my clients did not find it easy to learn exactly how the different segment containers work. I have decided to explain it so you can understand them once and for all, if you still struggle with them.

Adobe Analytics Segments - The Basics

Those of you who have been in the web analytics market long enough will remember that in old versions of SiteCatalyst, there was no concept of segmentation. As an alternative solution, you could use ASI slots, DataWarehouse segments or VISTA rules. However, these solutions were clunky, rigid and, sometimes, expensive. The Adobe Analytics segments as we know them today come from the release of SiteCatalyst 15. Initially, the tool was still immature, but over time, it has become more sophisticated and it is still evolving. In this post, I will be covering the very basics of segment creation in Adobe Analytics.

Leveraging second party data in AAM

In an Adobe Audience Manager implementation, the first and most important data source is the data you already own. Then, when no more juice can be squeezed from first party data, we switch to purchasing third party data. Finally, in some cases, we go beyond and look for second party data. Today, I will focus on this last resort, which can be more interesting than it initially looks.

Reporting on multiple currencies

Adobe Analytics is very good at reporting on revenue. This metric can be used together with virtually any dimension. You get both granular and high-level views of revenue and you can even track multiple currencies, in case you sell in various regions with different currencies. However, there is one limitation: it is not possible to report on multiple currencies; the reports only show the report suite’s currency. But not all is lost; it is possible to get multiple currencies with a specific implementation, which I am going to show you next.
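The limitation is easier to see with numbers. The sketch below, in Python, assumes a report suite in EUR and converts each order into that currency before adding it up, so the original currency no longer appears in the total. The exchange rates, the order amounts and the function name are made up for illustration; this is not the implementation described in the post.

```python
from decimal import Decimal

# Hypothetical exchange rates into the report suite currency (assumed: EUR).
RATES_TO_EUR = {
    "EUR": Decimal("1"),
    "USD": Decimal("0.90"),
    "GBP": Decimal("1.15"),
}


def to_report_suite_currency(amount: str, currency_code: str) -> Decimal:
    """Convert an order amount into the report suite currency, rounded to cents."""
    converted = Decimal(amount) * RATES_TO_EUR[currency_code]
    return converted.quantize(Decimal("0.01"))


# Orders placed in three different currencies (illustrative values).
orders = [("100.00", "USD"), ("50.00", "GBP"), ("20.00", "EUR")]

# The report only sees the converted figures: 90.00 + 57.50 + 20.00 EUR.
total_revenue = sum(to_report_suite_currency(a, c) for a, c in orders)
```

Once the amounts are summed, there is no way to tell from `total_revenue` alone how much was originally paid in USD or GBP, which is why a dedicated implementation is needed to report per currency.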

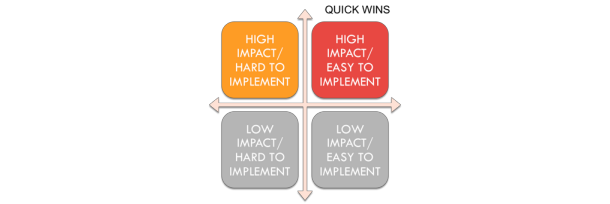

Marketing Cloud Quick Wins

Imagine the following situation. You are working as a Multi Solution Architect, specialised in the Adobe Marketing Cloud. A big company, which has never used Adobe’s products, has purchased most or all Adobe Marketing Cloud products. Your task is to lead the implementation of the project and put together all products in a way that delivers maximum benefits for the customer. What do you do next?

Business vs technology

When I was in my last year of university, I had a project management subject. I was studying Electronic Engineering at the time. The teacher had a great deal of experience in projects and he also had a technical background. During one of the lessons, he made a statement that struck all of us in the classroom. He said that, in order to progress in our careers, at some point in time we would have to give up technology and move to the business side. I still remember my internal reaction: I did not like it. Judging by the rumours, I think my point of view was shared by many of my classmates.

Share Audience Manager Segments in Adobe Target

[UPDATE 29/10/2017] As Javier has pointed out and after some internal checks, there is no longer a destination, nor any mappings. Nothing is wrong with that, as it will still work as expected. Just ignore the section “Check mapping in AAM”. Thanks Javier! Adobe is now selling a Marketing Cloud. You can still get a license for individual products, but the moment you have two or more, you should connect them together. Today I am going to explain how you should connect Adobe Audience Manager and Adobe Target. The use case is very simple: you want to use AAM segments to create personalisations through Target. And you want this segment sharing to happen in real time: as soon as a user qualifies for a segment in AAM, you want to be able to use it in Target.

Provisioning Shared Audiences and A4T

[UPDATE 19/11/2022] I recently revisited this post, which was written 6 years ago, and realized that it is no longer valid. I am keeping it for historical reasons, but you should not follow it. I believe that this provisioning is currently done automatically. Some screenshots and links are also obsolete. In a previous post, I explained what Analytics for Target (A4T) was and how to use it. However, I did not explain how to get provisioned for A4T. In this post, I will explain what you need to request the provisioning for shared audiences and A4T. Although these two features are different, the provisioning form is the same. In fact, you can request both at the same time. One word of caution: I am not going to explain here what the consequences of this provisioning are. Therefore, only place this request once you know you need one of the two features (or both). Otherwise, I would suggest you refrain from requesting them just for the sake of having them.

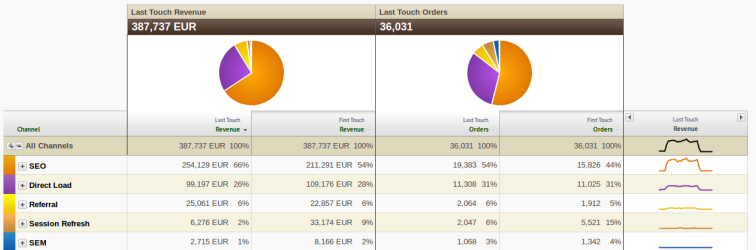

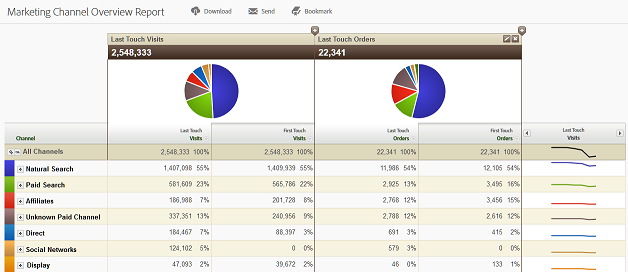

Marketing Channels Reports

This is the last post of a mini-series on Marketing Channels. By now, you should understand the basics of Marketing Channels, how to manage the channels and how to create new rules. All of this process is necessary to reach the final point, when you can finally use the data for reporting. This is the moment you have been waiting for: using the data for something useful.

Marketing Channels Processing Rules

I continue diving into the details of the Marketing Channels tool. So far, I have given an initial introduction to Marketing Channels and explained the Marketing Channels Manager. Today’s post is going to be the most technical of all of them, although I am not going to reference a single line of code. I will explain how to set up the Marketing Channels Processing Rules and their order to get the results that you are expecting.

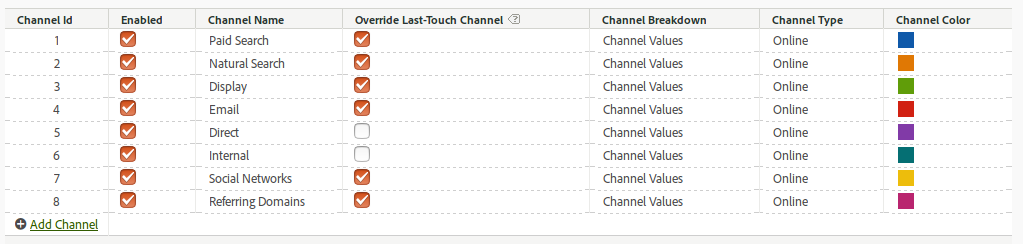

Marketing Channels Manager

This is the second article in the series on Marketing Channels that I started two weeks ago, with an introduction to Marketing Channels. Today, I will go, step by step, through the process of setting up this tool. Initially, I thought about explaining in one single article how to create the channels and how to configure them. However, I prefer to write shorter posts with more concise information rather than very long articles. So, in this post, I will concentrate on the initial steps with Marketing Channels.

Marketing Channels Fundamentals

With this post, I am starting a mini-series on Adobe Analytics Marketing Channels. I will be explaining what they are, how to set them up and how to use them in your reports. A quick Google search shows a number of results, including the official documentation, but I want to give a more comprehensive view on them. In this first post, I will get into the fundamentals of Marketing Channels.

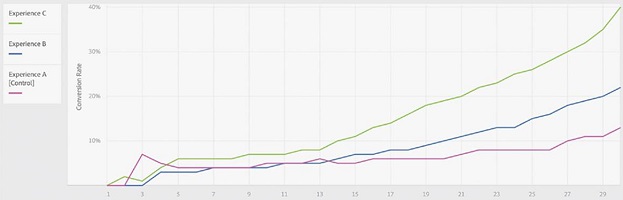

Analytics For Target (A4T)

One of the buzzwords in the Adobe Marketing Cloud environment for the last year or so has been “Analytics for Target” or A4T for short. It basically means using Adobe Analytics as the reporting tool for Adobe Target activities/campaigns. Why so much excitement about it? If you are optimising/personalising the website with Adobe Target and you have presented your reports to other people in your organisation, and these other people have access to Adobe Analytics, I am sure you have received the following question: why does the visitor count not match between the two tools? Typically, the first answer that comes to mind is that Adobe products are broken. I wonder how many Adobe customers have raised a ticket through client care. The answer requires a bit of understanding: each tool counts the visitors differently and there is a reason for that.